Working as a Data Engineer I often see that unit tests are written for the applications that are developed, but often forgotten about when doing database development. We would assume that it’s the de facto standard in 2019 if the target is to be fully DevOps as a development team.

There are many great third party tools out there that simplifies setting up the processes, most of them requires licenses. Therefore I will go back to the basics so that you cannot blame the license cost for not implementing database unit testing.

This post will focus on the core concepts around setting up an Azure SQL database using tSQLt as unit test framework. I assume that you are familiar with Azure DevOps, SQL Server Data Tools and dacpac deployments.

Please make sure that you have the following created before continuing

- Azure SQL Server with one database where it is post-fixed with CI. For example ndevdb_ci will be used throughout this post.

- Azure DevOps project connected to your favorite source control system.

Setting up the database project

As we are using SQL Server Data Tools for modelling and building the database, the Visual Studio IDE is probably a familiar sight. Create a new SQL Server Database project with the naming of your choice.

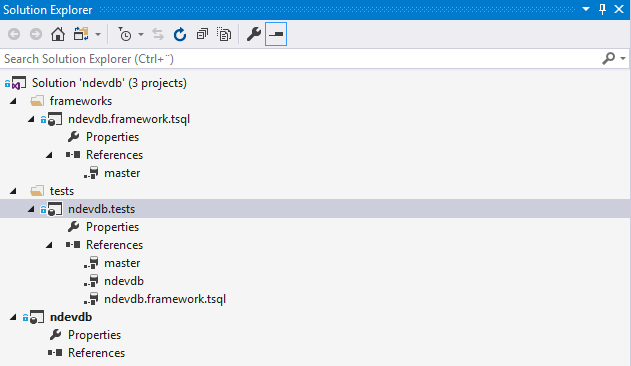

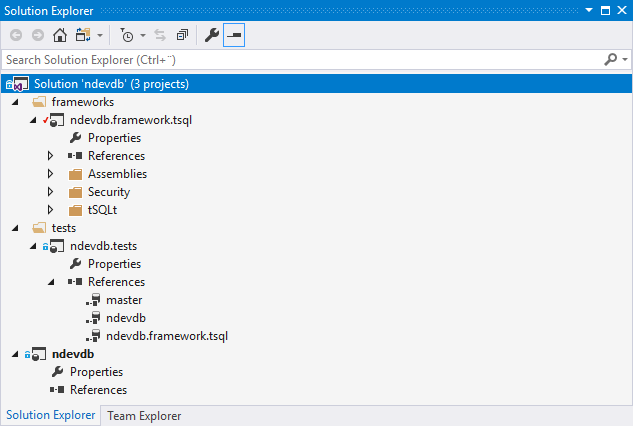

Initially we want to create a folder structure with project files that break down our solution into different parts. Make sure that all the projects are targeting Microsoft Azure SQL Database V12 if not developing on another version of SQL Server. For the purpose of this post I have created the following:

ndevdb is the project that contains our database model.

ndevdb.framework.tsql is the project that contain the tSQLt framework that is used when writing unit tests. The reason for having a separate project for the unit test framework is because we only want one place to update the definition when new versions are published.

ndevdb.tests is a composite project that will have a reference to both the tSQLt and ndevdb databases. This is the project that contains our database unit tests, and will be deployed to the CI database.

Note! The unit test project is also referring to the master database so that Azure DevOps release pipeline is able to fetch the master.dacpac file when deploying.

Note! As of 2019-02-26 SSDT contains a bug that has a hard reference to the master.dacpac file. Make sure to unload both of the projects containing the master database reference and update the include path to t he following: $(DacPacRootPath)\Extensions\Microsoft\SQLDB\Extensions\SqlServer\AzureV12\SqlSchemas\master.dacpac

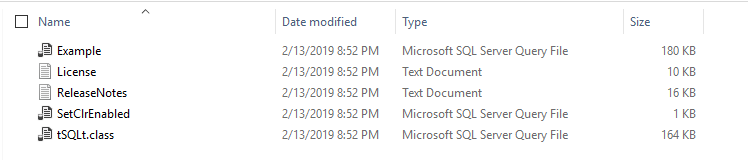

Importing the tSQLt definition

The first step is to get the latest release of tSQLt from the official website. Inside the downloaded zip file we will find tSQLt.class file that is containing the script to install the framework on a targeted database. As we will install tSQLt on a Azure SQL database, running the SetClrEnabled is not necessary and can be ignored.

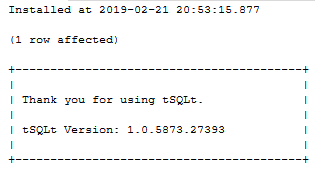

Open the tSQLt.class file in your favorite database management/query tool and execute it. When the script is done executing the installation the following message will be given.

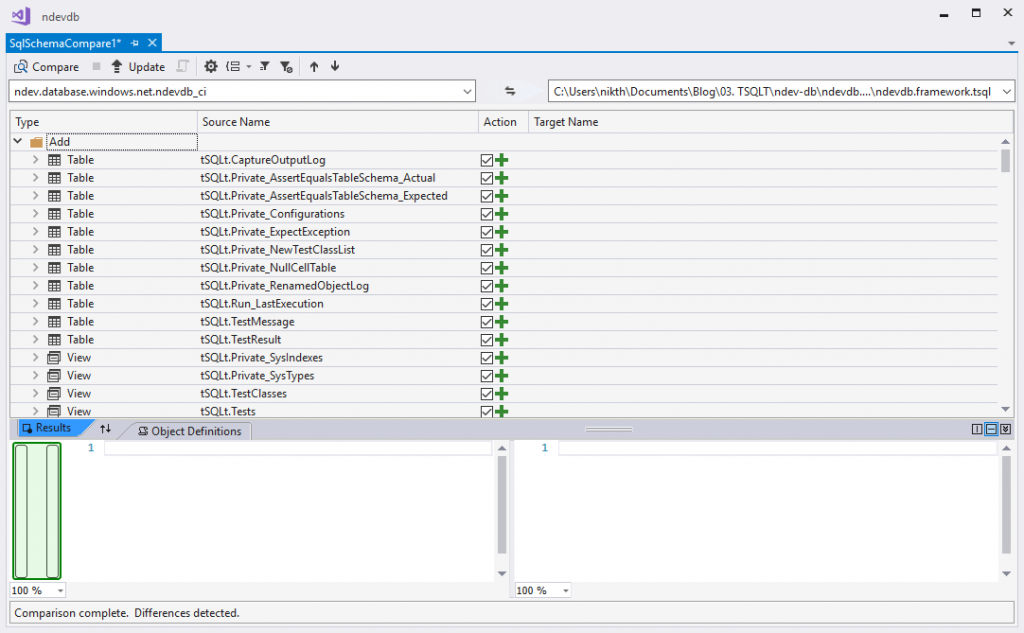

Navigate back to the Visual Studio solution and initiate a Schema Compare where the source is ndevdb_ci and the target is ndevdb.framework.tsqlt.

When the schema compare is done we will see the list of all the tSQLt objects that was installed. Click the update button to import everything to the database project and the solution should look something like this.

Note! I have yet not discovered an easy way to import the tSQLt objects without installing it on a database first, so if you know a better way please let me know in the comments below!

Create your first unit test

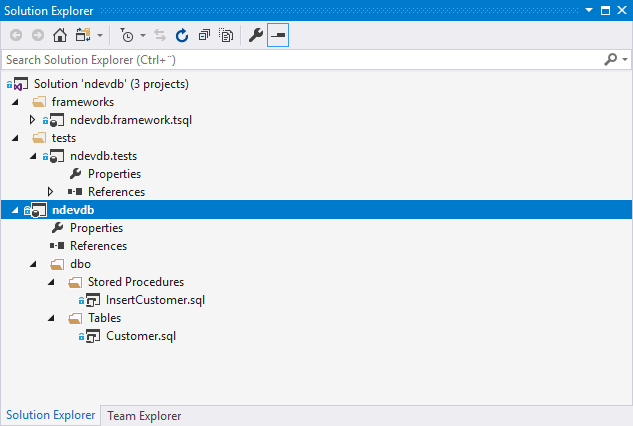

With some “television magic” I have created the initial structure of the ndevdb database with a table named Customer and a procedure to insert a customer.

The Customer table has the following definition

And the InsertCustomer procedure has the following definition

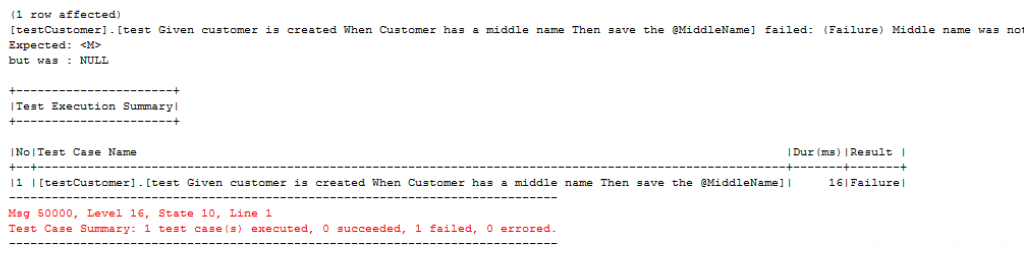

Currently the procedure for inserting a customer has no functionality because we always want to write our unit tests to verify that the acceptance criteria for the method is met. This means that our unit test always will start failing when we do the initial deployment to our ndevdb_ci database. Let’s populate the tests project with a unit test.

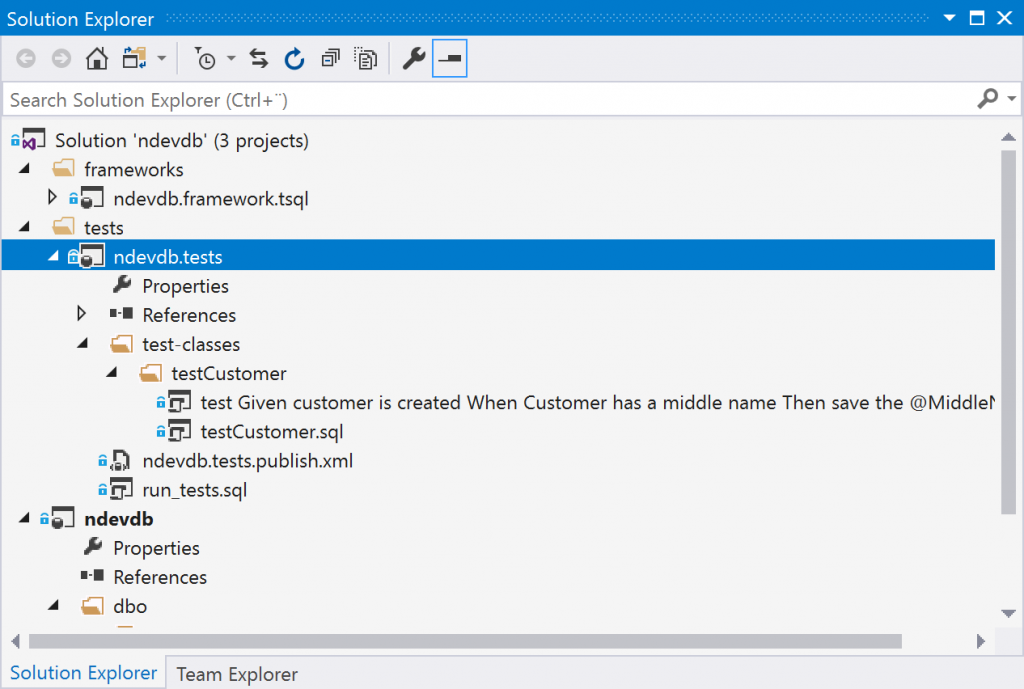

First we need to define a test-class. This is normally done using the NewTestClass method in the tSQLt framework, but to get the it compiled in SSDT we have to define it manually. Create a new SQL script that is part of the build process and name it testCustomer. Add the following content.

When the test-class is defined we are now able to write and run unit tests for that schema. Our first test will be to verify that the middle name of the customer is saved when the parameter @MiddleName has a value. Create a new stored procedure with the name [test Given customer is created When Customer has a middle name Then save the @MiddleName]. You may notice I follow GivenWhenThen style for representing my unit tests.

I haven’t written much about structuring the project, but you may have something looking like the below image. For now ignore the file run_tests.sql as it’s a topic for another post, and is often run as a post deployment step on publish.

Let’s prepare the solution for deployment using a publishing profile that also will be included in the release pipeline later. Create the publish profile with the name ndevdb.tests.publish.xml and make sure that you configure it to drop things in target but not in source.

For now make sure that the Target database connection is targeting ndevdb_ci and click the publish button. If the deployment went well make sure that the target database connection is removed and re-save the publish profile.

Navigate back to your favorite database management/query tool and execute the EXEC tSQLt.RunAll against the ndevdb_ci database. You will notice that the test case we have written is failing, but – “hey! we want the test result as a report in Azure DevOps and not as a text output?”

Configure release pipeline to execute unit tests and publish test results

Here I assume that you have most of the knowledge about setting up a build pipeline in Azure DevOps as it’s required to get the release build. These few next steps will enable us to display the test results within Azure DevOps.

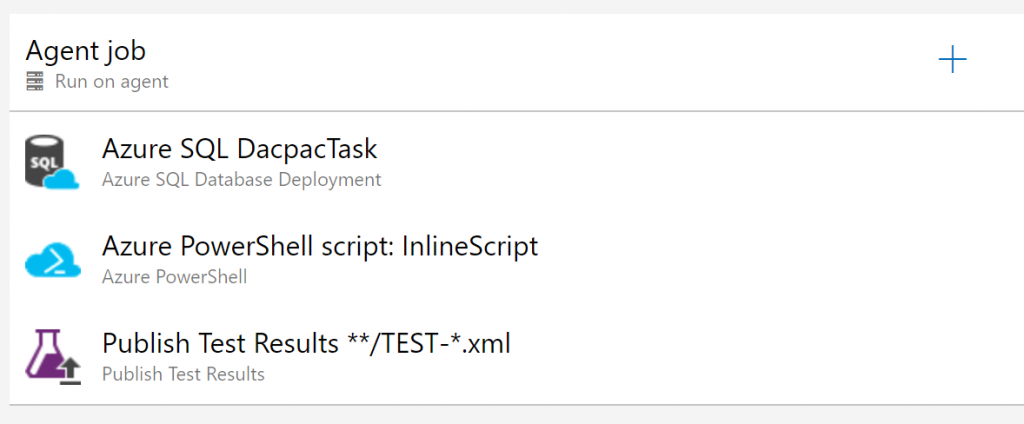

Create a release pipeline with name ndev-db-release where the artifact source is set to the output of your build pipeline. When that is done create a new stage with the name Unit Tests that will have two responsibilities

- Deploy our database based on the output of the ndevdb.tests project

- Run and retrieve the test results so they can be published to the deployment stage.

In basics this require a few tasks; Azure SQL Database Deployment, Azure Powershell and Publish Test Results.

The configuration of Azure SQL Database Deployment task is straightforward, just make sure to pick the test project (ndevdb.tests) and corresponding publish profile (ndevdb.tests.publish).

For the Azure Powershell task we will for demonstration purpose use an inline script that will connect to the database and run the necessary stored procedures. The steps for connecting are taken from the answer given on StackOverflow and modified to suit the needs for this post.

Note! I’m running the tSQLt.RunAll command as part of the PowerShell step, but this can be a database post deployment step as well.

Publishing the test results doesn’t require any configuration if the inline script is used as is. Just add the task to be executed after the Powershell script has run.

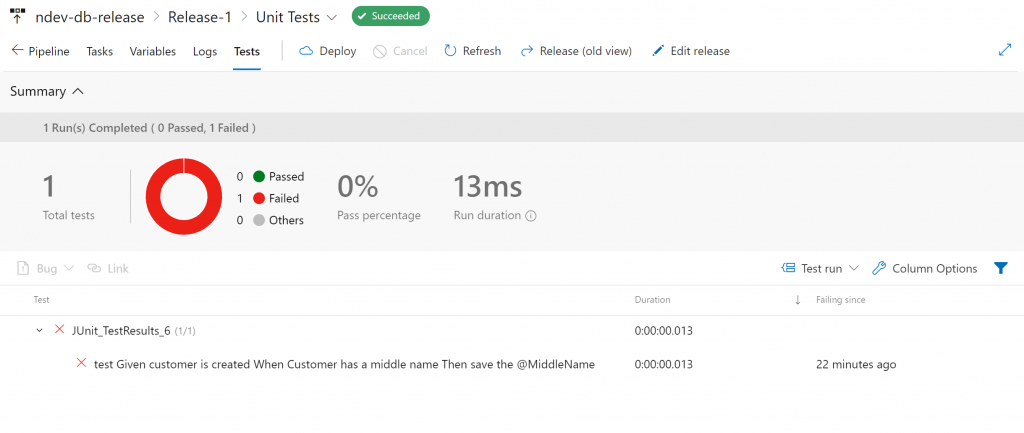

If everything has been configured correct we should be able to save the changes and create a new release. Create a new release and wait for the Unit Tests stage to complete. When the release process is done navigate inside the release under the unit test stage and click the Test tab. Neat right? Now we only need to implement the logic that satisfies the needs of the unit test.. but that’s another story 😉

Leave your comments or feedback below, I would love to get your inputs!

Hey!

I am struggling with the release pipeline and hope you can help me out. I have a Visual studio project like you have set up and an azure sql database (xx_ci) with tsqlt. In my release pipeline I have the tasks you have defined, but when I am running it, it deletes all the tables and procedures that is not defined in my test sql project. This happens in the Azure SQL DacpacTask where I have set all parameter to the azure sql database referencing the test XXX_ci database and further set the Action to Publish, the Dacpac file is the test dacpac (…tests.dacpac) and the publish profile is set to the xx.tests.publish.xml which I adjusted according to your blog post)

When this release pipeline is running it deletes all tsqlt tables and procedures, since this is not found in the test project. This is a side effect I was not expected. I hoped that it only would deploy the tests from the test project and keep all other things.

Since you have done this, I hope you can guide me through this so I can make it work. I believe I have done things what you said but I am not coming through the steps in your blog since it deletes all tsqlt things and therefore is not able to run the tests.

Hope you can read this and give me some advice

Hey,

Sorry for late reply, been quite busy before the summer hit us in Norway and then off work doing some mountaineering during the vacation time. Did you find out what was wrong? If not, I may think that the issue occurs due to some wrong reference in the test project.

1. Open the Visual Studio solution and find the test project. Open the folder references and verify that it’s referencing the tSQLt project and your database project. In my example I have two references – ndevdb and ndevdb.framework.tsqlt.

2. If the references are there, do you remember if the database location was set to same database during the Add database reference? If not, I guess this is the case. When adding database references and setting the database location as same database the build should generate a dacpack where your database will contain all objects from the tSQLt project and your database project.

Did any of the above help? If not please drop me a message here or on LinkedIn and I try help out 🙂

Thank you very much for this! I was able to get my own deploy/test/report pipeline set up. Very informative and helpful.

No problem, thank you for appreciating the post! 🙂

Hi Nicolai, Great article. Currently using this one for a mock up. I’ll blog about this later! But one issue I have is the execution of the powershell script. The build and release happens on different servers and therefore has different ip adresses. I have contineous firewall issues. I see different Ip adresses. So I was wondering how you solved that? Hennie

I’ve used now the option : “Allow access to azure services” and seems to work now.

I’ve checked the location of the Azure Devops for me and compared the ip adresses with the list provided : publicIPs_20190715.xml but can’t find any of the blocked Ip adresses.

For now it’s ok but it seems a bit unhandy to add 50 to 100 ip adresses for connecting to Azure SQL database. Even it seems that the ip adresses changes on regular base?!

I’m not an admin so I expect that I miss something here….But what 😉

Cool, Hennie! Read your blog and I’ve bookmarked it 🙂

I see your challenge and by default Azure SQL is protected by a firewall. Allowing Azure Services however will open up the database for all people running something in Azure I think. In Azure DevOps you could go to the Firewall tab for the database deployment task and choose the Auto Detect feature. Remember to check the option for deleting database rules when it’s done as well. It can be that the Azure DevOps service need to be a registered as an enterprise application (app registration) in AD with correct permissions to be able to perform this.

Hi Nikolai,

Thanks for this great post.

I am using SSDT for db unit tests. Having a problem in managing connection string securely. Whatever connection string realized at DevOps pipeline execution is physically present (and copied) in to the agent where it is running. It is a security hazard, and I want to get rid of this. Since the values of the connection string is updated during execution, I can’t see the encryption of connection string it it’s own form won’t work. Any ideas would be appreciated.

Btw, I wrote an article how the configuration looks like here: https://medium.com/@shanmugamchinnappa/securely-configuring-azure-devops-pipeline-for-sql-unit-testing-be81f4c2cc10

Hi Nikolai

Thanks so much for this, very helpful. I can’t believe this is the only place in the whole internet where someone has been able to spell it out!

That bloomin’ master.dacpac bug arrgh, thought i was going mad

thanks again

Hi Jim,

No problem! It’s a really annoying bug, and almost drove me mad as well. Glad my post helped you out

Br,

Nikolai

Hi Nikolai

I’ve gone crazy building tests with lots of success (thanks again) but now I think I’ve come across an issue with the final result powershell script, the xml test result file seems to get truncated after about 2000 characters any thoughts on how to stop that?

I’ve been able to recreate the issue by running the powershell locally

Thanks

Jim

Ah it seems that its actually tSQLt.XmlResultFormatter that’s truncating it

Hi me again, I’ve got a work around for this limitation. I changed the powershell script to get a list of the classes then execute each one and pop them all in the same file (I also have to remove the extra that the export to xml pops on each one.

Here’s the script, powershell isn’t one of my skills really so i expect someone else might look at this and think it could be done a lot better but anyway, it works (although dev ops does like to give a little delay before the results come up just to make you sweat)

$connectionString = $(ConnectionString)

$Sqlcommand = “SELECT name FROM sys.schemas where name like ‘test%'”

$connection = new-object system.data.SqlClient.SQLConnection($connectionString)

$command = new-object system.data.sqlclient.sqlcommand($Sqlcommand,$connection)

$Connection.open()

$SqlAdapter = New-Object System.Data.SqlClient.SqlDataAdapter $command

$dataset = New-Object System.Data.DataSet

$SqlAdapter.Fill($Dataset)

$Connection.Close()

$Result = $Dataset.Tables[0]

$List = @();

Foreach ($Record in $Result)

{

$sqlCommand =”BEGIN TRY EXEC tSQLt.Run ” +($Record.name)+ ” END TRY BEGIN CATCH END CATCH; EXEC tSQLt.XmlResultFormatter”

$command = new-object system.data.sqlclient.sqlcommand($sqlCommand,$connection)

$connection.Open()

$adapter = New-Object System.Data.sqlclient.sqlDataAdapter $command

$dataset = New-Object System.Data.DataSet

$adapter.Fill($dataSet) | Out-Null

$connection.Close()

$List += $DataSet.Tables[0].Rows[0].ItemArray[0]

}

$TempList1 = $List -Replace ”,”

$TempList = $TempList1 -Replace ”,”

$OutFile = “”+$TempList+””

$OutFile|out-file “$(System.DefaultWorkingDirectory)/_AzureDWH-CI/TEST-results.xml”

Thanks for the update, Jim! I was planning looking into your question this weekend, but it look like you found a work around before I got the chance.

I will update the this post at the end with your work around if you don’t mind? Else I would look into creating a issue at https://github.com/tSQLt-org/tsqlt/issues 🙂

Hey no worries, thanks to your excellent page i wouldnt have gotten anywhere without it. I notice the website dropped dropped the greaterthansymbol-testsuites-lessthansymbol and greaterthansymbol-slash-testsuites-lessthansymbol from the replaces at the end of my code so watch out go that, i think it thought i was trying to inject some code, it was the easiest way i could think of mashing the separate xml outputs together 😀

Hi Nikolai,

could you provide whole VS solution of this? I’m struggling with this for longer time. Actually Visual Studio has still problems with tSQLt schema when I choose target platform Azure SQL database. So I cannot build the solution and whole “build pipeline things are suddenly gone”.

Even if I have reference to master database errors like still happens: “SQL71501: Function: [tSQLt].[Private_ScriptIndex] contains an unresolved reference to an object. Either the object does not exist or the reference is ambiguous because it could refer to any of the following objects: [sys].[columns].[IC]::[is_included_column], [sys].[index_columns].[IC]::[is_included_column], [sys].[index_columns].[is_included_column], [tSQLt].[Private_SysIndexes].[IC]::[is_included_column] or [tSQLt].[Private_SysIndexes].[OIC]::[is_included_column].”

Btw: Thath dacpac variable problem is resolved in VS 16.4.1/2, but I cannot find the github link for it.

Hi Dee,

Didn’t notice that your message happened to be put in the spam folder, but the VS solution is published at my GitHub repo.

Please let me know if you have any issues with the sln and I will come back to you.

Hi There

Had a guy on Stack overflow give me a neater solution that uses run all which might be better for your page

$out = “”; Invoke-SqlCmd -ServerInstance “xxxx” -Database “xxxx” -Query “exec tSQLt.Runall ; exec tSQLt.XmlResultFormatter” -Username “xxx” -Password “xxxx” -MaxCharLength 1000000 | %{ $out = $out + $_[0]}; $out > $(System.DefaultWorkingDirectory)/_AzureDWH-CI/TEST-results.xml

I’ve read this post and the comments and found it very helpful for trying to implement database unit testing in the devops pipeline. I say “trying to” as I’m very close but not quite there.

When I use the Invoke-SqlCmd above I notice that if one of my tests fails tSQLt throws an error which is captured by the PowerShell and nothing is outputted to the results xml file. This seems to defeat the purpose of being able to run the tests, if the tests can only be run if they all pass. Does anyone have a solution to this?

Hi

Thanks for a great tutorial! Regarding your comment about an easier way to import tSQLt: I haven’t found a way that doesn’t involve a database, but I tried doing it without leaving Visual Sudio (2019) at least, and it worked. In your example I would right click on ndevb.framework.test, and Go to debug database. In there, choose the localdb\ProjectV13 database that corresponds to the project, eg ndevb.framework.test. Right click the db, New query, then install tSQL directly in the localdb database. Once done you can import from the localdb to the project. It’s not easier really, but at least you can do everything in one place

Hi,

The 2033 limit issue is just because the XML is spread over multiple rows. You can enumerate the rows using a foreach in PowerShell and build the file up pretty easily; code below;

$connectionString = “”

$sqlCommand = ‘BEGIN TRY EXEC tSQLt.RunAll END TRY BEGIN CATCH END CATCH; EXEC tSQLt.XmlResultFormatter’

$connection = new-object system.data.SqlClient.SQLConnection($connectionString)

$command = new-object system.data.sqlclient.sqlcommand($sqlCommand,$connection)

$connection.Open()

$adapter = New-Object System.Data.sqlclient.sqlDataAdapter $command

$dataset = New-Object System.Data.DataSet

$adapter.Fill($dataSet) | Out-Null

$connection.Close()

$data = $dataSet.Tables[0]

foreach($row in $data)

{

$row.ItemArray[0] | Out-File -Encoding Ascii -append “$(System.DefaultWorkingDirectory)\TEST-devdb.xml” -NoNewline

}

Hello! Thank you for this post. I have an issue with tSQLt in AzureDB. When I try to run tests, I get this kind of error message: Msg 50000, Level 16, State 10, Procedure tSQLt.Private_Init, Line 12 [Batch Start Line 0] Cannot access CLR. Assembly might be in an invalid state. Try running EXEC tSQLt.EnableExternalAccess @enable = 0; or reinstalling tSQLt. The same tests works perfectly within my local database on SQL Server. Would you please help me to fix his issue? Thank you in advance

Hi Kirill,

From my understanding there are two versions available at the time. And based on my assumption you have to pick the option to download that is for SQL Server 2005/Azure SQL databases. Have you downloaded the correct version? (https://tsqlt.org/downloads/).

If I remember correct I think the author of tSQLt has a case ongoing wth Microsoft, getting the new version to work with Azure SQL databases.

Hi guys, i getting this error trying while running powershell step

Exception calling “Fill” with “1” argument(s): “Could not find stored procedure ‘tSQLt.XmlResultFormatter’.”

PowerShell exited with code ‘1’.

Apparently I’ve stopped receiving notification from my site. So sorry for not replying before now.

Did you manage to resolve the issue? I assume that the error is caused by one of the folloing

– Your connection string is either pointing to the wrong database and therefore the stored procedure is not found.

– tSQLt framework is not installed on the given database.